Welcome to the fourth issue of the DIoC Newsletter. In this issue we cover the Definition and Analysis of the Modern Data Stack, Three Tools for Fast Data Profiling, Who’s Who in the Modern Data Stack, Four Software Engineering Best Practices to Improve Your Data Pipelines, and Key Learnings and Tradeoffs of Implementing Data Contracts. Let’s get started.

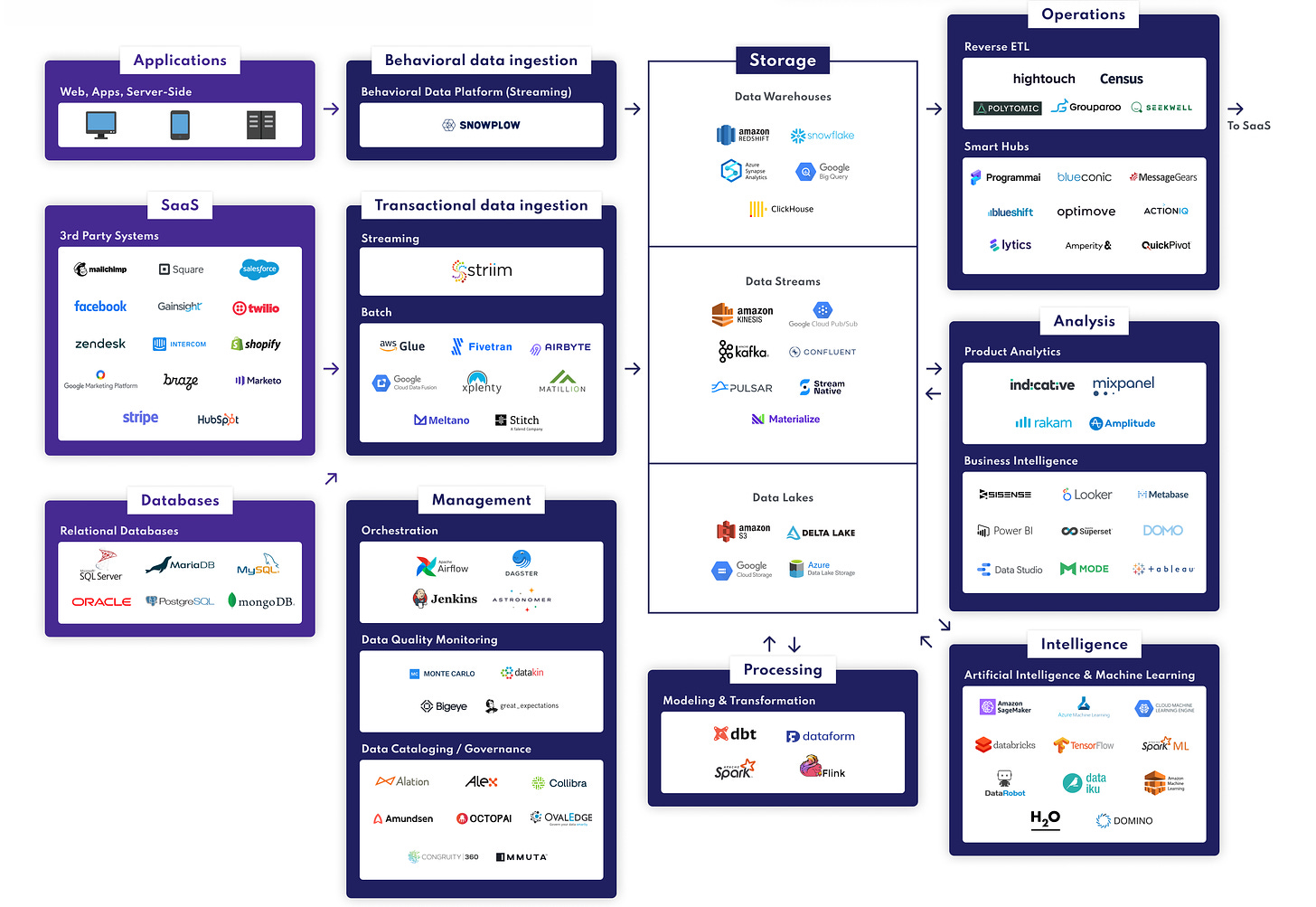

Definition and analysis of Modern Data Stack

We can define a modern data stack as a flexible set of tools and technologies that help businesses store, manage and learn from their data.

Why is the modern data stack picking up?

- Shifting to open-source

- Flexible pricing

- Need for agile analytics

- Flexibility in tech-stack

Layers in a typical modern data stack

- Data sources

- Data ingestion and/or transformation tools

- The master database(s)

- Data preparation and processing tools

- BI, ML/AI and reverse ETL tools

What's driving the modern data stack?

- The rise of Cloud Data Warehouses (DWH)

- Switching from ETL (Extract-Transform-Load) to EL(T): Extract – Load – (Transform)

- The growing use of self-service analytics solutions
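
To make the shift from ETL to EL(T) listed above more concrete, here is a minimal sketch in Python. It uses the standard-library `sqlite3` module as a stand-in for a cloud data warehouse; the table names, column names and sample rows are hypothetical and are not taken from the source article. The point is only the ordering: raw data is loaded first, and the transformation runs afterwards as SQL inside the database.

```python
import sqlite3

# Hypothetical raw records, as they might arrive from a source system.
RAW_ORDERS = [
    ("1001", "2022-05-01", " 19.90 "),
    ("1002", "2022-05-01", "5.00"),
    ("1003", "2022-05-02", "42.10"),
]


def run_elt(conn: sqlite3.Connection) -> list[tuple[str, float]]:
    """Load raw rows untouched, then transform inside the database with SQL."""
    # Extract + Load: land the data as-is, every column stored as text.
    conn.execute(
        "CREATE TABLE raw_orders (order_id TEXT, order_date TEXT, amount TEXT)"
    )
    conn.executemany("INSERT INTO raw_orders VALUES (?, ?, ?)", RAW_ORDERS)

    # Transform: clean and aggregate after loading, using the database's SQL engine.
    conn.execute(
        """
        CREATE TABLE daily_revenue AS
        SELECT order_date,
               ROUND(SUM(CAST(TRIM(amount) AS REAL)), 2) AS revenue
        FROM raw_orders
        GROUP BY order_date
        """
    )
    return conn.execute(
        "SELECT order_date, revenue FROM daily_revenue ORDER BY order_date"
    ).fetchall()


if __name__ == "__main__":
    connection = sqlite3.connect(":memory:")
    for order_date, revenue in run_elt(connection):
        print(order_date, revenue)
    connection.close()
```

In a classic ETL flow the trimming and casting would happen before the load; here the raw table stays available, so the transformation can be changed and re-run without extracting the data again.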

What changes with Cloud Data Warehouse?

- Speed

- Connectivity

- User access

- Flexibility & Scalability

The modern data stack is becoming the most efficient way to use the latest breakthroughs in data engineering and analytics, so that you can stay agile in how data is used and developed within your business.

Source: https://octolis.com/blog/modern-stack-data

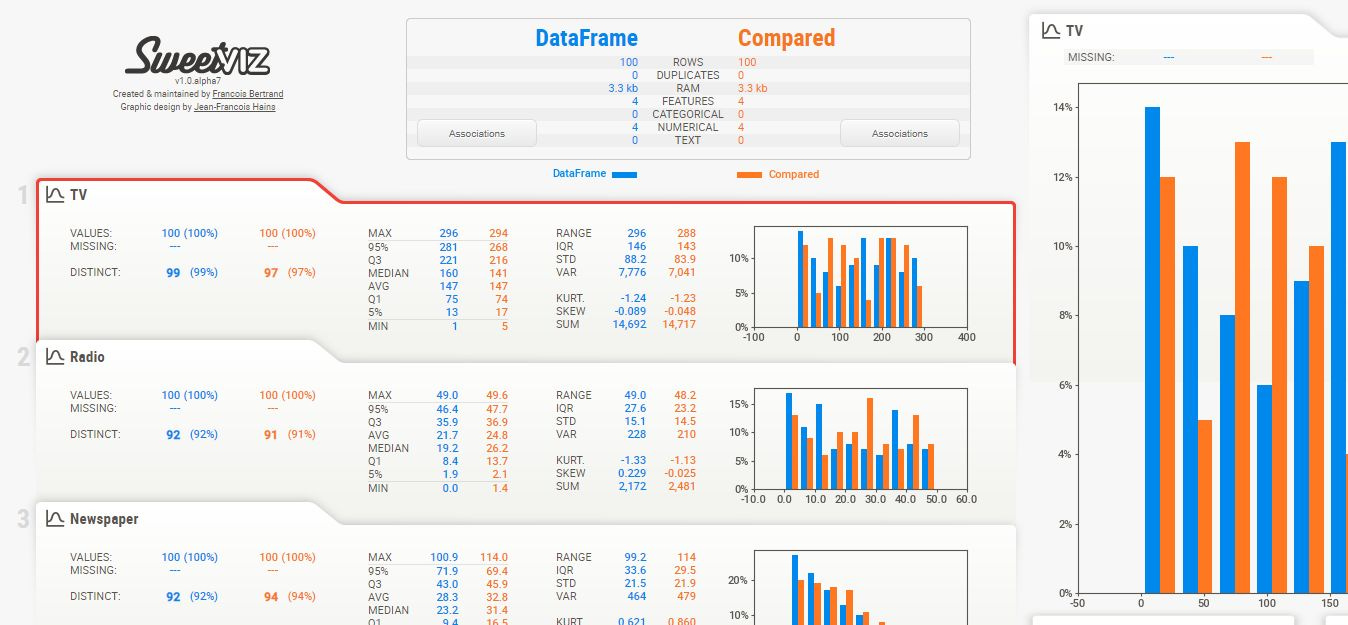

Three Tools for Fast Data Profiling

Quickly analyse and summarise your data with these Python tools

Data profiling is a form of EDA which seeks to analyse, describe and summarise a dataset to gain an understanding of both its quality and fundamental characteristics.

Many of the steps involved in data profiling are common across different datasets and projects.
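
As an illustration of those common steps, the sketch below computes a few typical profiling measures (missing values, distinct values, basic statistics) using only the Python standard library. The sample rows and the `profile` helper are hypothetical; this is not how any of the libraries discussed here work internally, just a small picture of the kind of summary they produce.

```python
from collections import Counter
from statistics import mean

# Hypothetical sample rows; None marks a missing value.
ROWS = [
    {"city": "Pune", "age": 31},
    {"city": "Delhi", "age": None},
    {"city": "Pune", "age": 45},
    {"city": None, "age": 28},
]


def profile(rows: list[dict]) -> dict[str, dict]:
    """Return per-column missing and distinct counts, plus basic statistics."""
    report = {}
    columns = sorted({key for row in rows for key in row})
    for column in columns:
        values = [row.get(column) for row in rows]
        present = [v for v in values if v is not None]
        summary = {
            "missing": len(values) - len(present),
            "distinct": len(set(present)),
            "most_common": Counter(present).most_common(1)[0][0] if present else None,
        }
        # Numeric columns also get min, max and mean.
        if present and all(isinstance(v, (int, float)) for v in present):
            summary.update(min=min(present), max=max(present), mean=mean(present))
        report[column] = summary
    return report


if __name__ == "__main__":
    for column, summary in profile(ROWS).items():
        print(column, summary)
```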

Because these tasks are quite routine, a number of open-source Python libraries seek to automate data profiling.

The three libraries covered in this article all seek to automate the routine task of profiling data before other data science techniques can be applied.

Although the tools perform a similar task, each has unique functionality.

Lux provides visual data profiling via existing pandas functions, which makes it extremely easy to use if you are already a pandas user. It also provides recommendations to guide your analysis with the intent function. However, Lux gives little indication of the quality of the dataset; for example, it does not provide a count of missing values.

Pandas-profiling produces a rich data profiling report with a single line of code and displays it inline in a Jupyter notebook. The report provides most elements of data profiling, including descriptive statistics and data quality metrics. Pandas-profiling also integrates with Lux.

Sweet-Viz provides a comprehensive and visually attractive dashboard covering the vast majority of the data profiling analysis needed. This library can also compare two versions of the same dataset, which the other tools cannot.

Source: https://towardsdatascience.com/3-tools-for-fast-data-profiling-5bd4e962e482

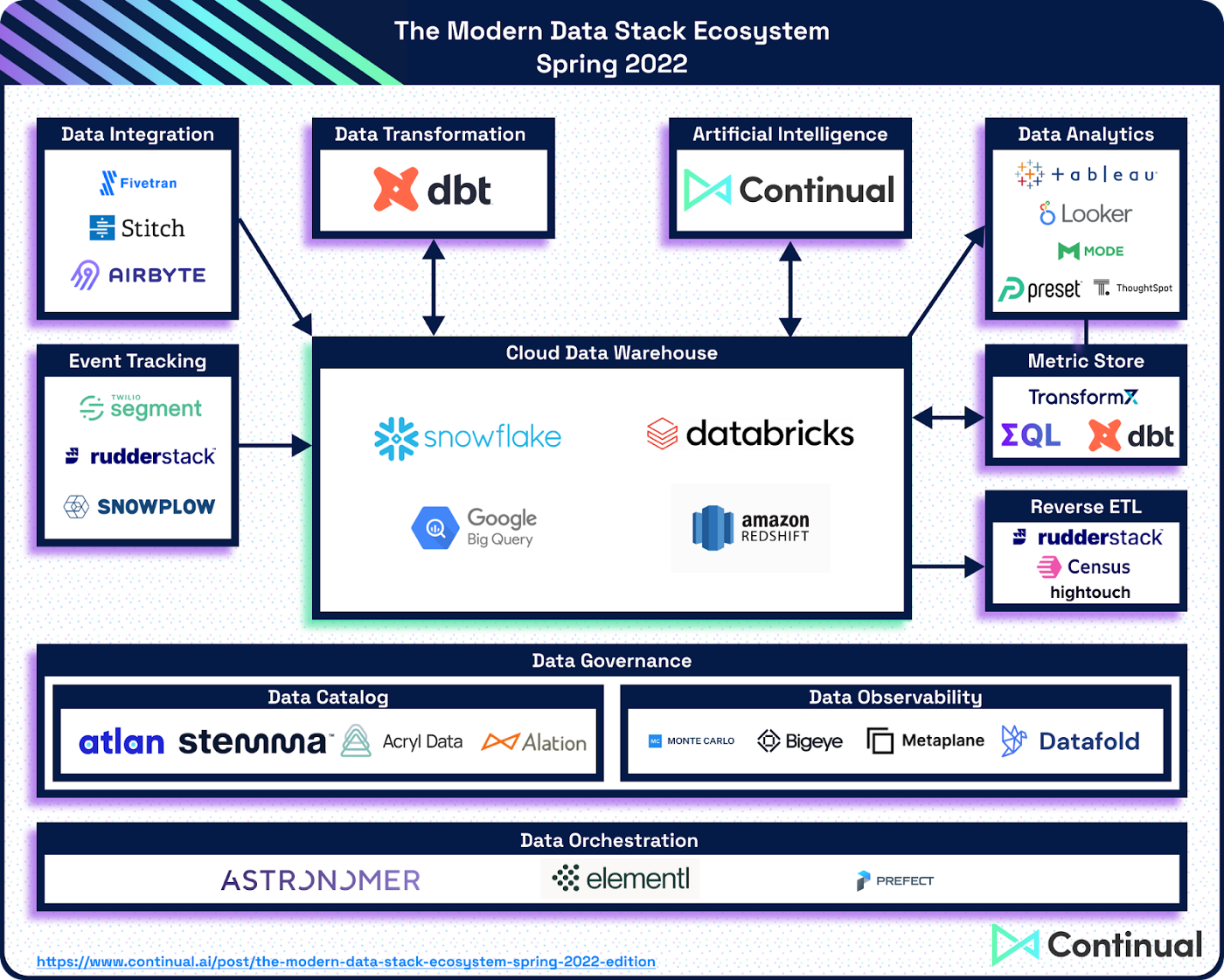

Who’s Who in the Modern Data Stack (MDS) Ecosystem (Spring 2022)

Technology for Modern Data Stack must be:

- A Managed Service

- Cloud Data Warehouse-Centric

- Operationally Focused

Current state of Modern Data Stack

- Expanding Beyond SQL

- Implementing Real-Time Use Cases

- Competing Against Legacy Data Stacks

Cloud Data Warehouse

- Main Tools: Snowflake, BigQuery, Redshift, Databricks

- On the Bubble: Firebolt, Dremio

Data Integration & Event Tracking

- Data Integration Main Tools: Fivetran, Airbyte, Stitch

- Data Integration On the Bubble: Hevo Data

- Event Tracking Main Tools: Segment, RudderStack, Snowplow

Data Transformation

- Main Tools: dbt Labs

Artificial Intelligence/Machine Learning

- Main Tools: Continual

Data Analytics/BI & Metrics Store

- BI Main Tools: Looker, Mode, Tableau, ThoughtSpot, Preset

- BI On the Bubble: Sigma, Lightdash, Superset, Glean

- Metrics Store Main Tools: dbt Labs, Transform, metriq

Reverse ETL/Data Operationalization

- Main Tools: Census, Hightouch, RudderStack

- On the Bubble: Hevo Data

Data Orchestration

- Main Tools: Astronomer, Elementl, Prefect

- On the Bubble: Flyte

Data Governance

- Data Catalog Main Tools: Atlan, Stemma, Alation, Acryl Data

- Data Catalog On the Bubble: Secoda, Metaphor Data

- Data Observability Main Tools: Monte Carlo, Bigeye, Datafold, Metaplane

Source: https://medium.com/@jordan_volz/whos-who-in-the-modern-data-stack-ecosystem-spring-2022-c45854653dc4

Four Software Engineering Best Practices to Improve Your Data Pipelines

From agile to abstraction, thinking about data the way we think about software can save us lots of grief.

There are some major differences between data engineering and software engineering.

Yet they’re similar enough that many of the best practices that originated for software engineering are extremely helpful for data engineering.

Data and software products are different, and their stakeholders are different.

But the essential practices of data engineering and software engineering are basically the same.

You’re writing, maintaining, and deploying code to solve a repeatable problem.

Here are some software engineering best practices you can (and should) apply to data pipelines:

1 — Set a (short) lifecycle

2 — Pick the right level of abstraction

3 — Create declarative data products

4 — Safeguard against failure
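
One possible reading of the fourth practice, sketched in Python: validate each batch before passing it on, and retry a step that fails for transient reasons. The validation rule, the helper names and the flaky extract step are all hypothetical and are not taken from the article; real pipelines would normally rely on their orchestrator's retry and alerting features.

```python
import time
from typing import Callable


class ValidationError(Exception):
    """Raised when a batch fails a sanity check."""


def validate(batch: list[dict]) -> list[dict]:
    """Fail fast on empty batches or rows without an 'id' (hypothetical rule)."""
    if not batch:
        raise ValidationError("empty batch")
    bad = [row for row in batch if row.get("id") is None]
    if bad:
        raise ValidationError(f"{len(bad)} row(s) missing 'id'")
    return batch


def run_with_retries(
    step: Callable[[], list[dict]], attempts: int = 3, delay: float = 0.0
) -> list[dict]:
    """Run a step, retrying on connection errors; validate the result before returning."""
    if attempts < 1:
        raise ValueError("attempts must be at least 1")
    for attempt in range(1, attempts + 1):
        try:
            batch = step()
        except ConnectionError:
            if attempt == attempts:
                raise  # out of retries: surface the failure instead of hiding it
            time.sleep(delay * attempt)  # simple linear backoff
            continue
        return validate(batch)
    raise AssertionError("unreachable")


def make_flaky_extract(failures: int) -> Callable[[], list[dict]]:
    """Hypothetical extract step that fails `failures` times before succeeding."""
    state = {"calls": 0}

    def extract() -> list[dict]:
        state["calls"] += 1
        if state["calls"] <= failures:
            raise ConnectionError("source temporarily unavailable")
        return [{"id": 1, "value": 10}, {"id": 2, "value": 20}]

    return extract


if __name__ == "__main__":
    print(run_with_retries(make_flaky_extract(failures=2)))
```

Validation errors are deliberately not retried here: a bad batch is unlikely to fix itself, so it is better to stop and surface the problem.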

The status quo and best practices are always in flux. This applies to software engineering and it definitely applies to data engineering.

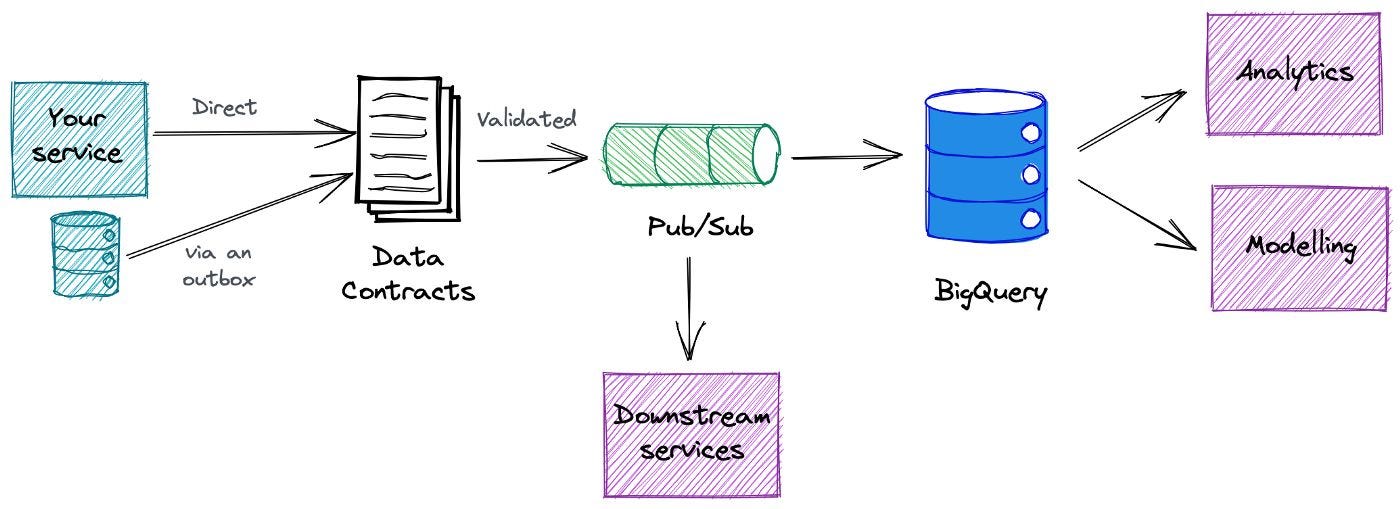

Key Learnings and Tradeoffs of Implementing Data Contracts

What are data contracts and do they make sense for your organization?

These are the data-related issues that arise from service changes made upstream:

- Data pipelines are constantly breaking and creating data quality AND usability issues.

- There is a communication chasm between service implementers, data engineers, and data consumers.

- ELT is a double-edged sword that needs to be wielded prudently and deliberately.

- There are multiple approaches to solving these issues and data engineers are still very much pioneers exploring the frontier of future best practices.

Data contracts could become a key piece of the data quality puzzle.
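
To give a feel for what a data contract can look like in code, here is a minimal sketch in Python. It assumes a contract is simply an agreed list of field names, types and required flags that a producer's records are checked against; the `ContractField` class, the `ORDER_CREATED_V1` contract and the `violations` helper are hypothetical and are not taken from the source article. Real implementations usually rely on a schema format and a registry instead of hand-written checks.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ContractField:
    name: str
    dtype: type
    required: bool = True


# Hypothetical contract for an "order created" event,
# agreed between the producing service and its data consumers.
ORDER_CREATED_V1 = (
    ContractField("order_id", str),
    ContractField("amount", float),
    ContractField("coupon_code", str, required=False),
)


def violations(record: dict, contract: tuple[ContractField, ...]) -> list[str]:
    """Return human-readable contract violations (empty if the record conforms)."""
    problems = []
    for field in contract:
        value = record.get(field.name)
        if value is None:
            if field.required:
                problems.append(f"missing required field '{field.name}'")
        elif not isinstance(value, field.dtype):
            problems.append(
                f"field '{field.name}' expected {field.dtype.__name__}, "
                f"got {type(value).__name__}"
            )
    # Fields the contract does not know about are flagged as well.
    unexpected = sorted(set(record) - {f.name for f in contract})
    problems.extend(f"unexpected field '{name}'" for name in unexpected)
    return problems


if __name__ == "__main__":
    print(violations({"order_id": "A1", "amount": 9.5}, ORDER_CREATED_V1))
    print(violations({"order_id": 7, "extra": 1}, ORDER_CREATED_V1))
```

A producer could run such a check before publishing, so that a breaking upstream change is caught at the source instead of in a downstream pipeline.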

Key learnings from implementing data contracts:

- Data contracts aren’t modified too much once set

- Make self-service easy

- Introduce data contracts during times of change

- Roadshows help

- Bake in categorization and governance from the start, but get started

- Interservices are great early adopters and helpful for iteration

- Have the right infrastructure in place

Tradeoffs in implementing data contracts:

- Speed vs. sprawl

- Commitment vs. change

- Pushing ownership upstream and across domains

Data contracts are a work in progress right now.

Adopting them is a technical and cultural change that will require commitment from multiple stakeholders.

Source: https://medium.com/@barrmoses/implementing-data-contracts-7-key-learnings-d214a5947d5e

What do you think about my weekly Newsletter?

If you have any suggestions or want me to feature your article, hit me up! I would love to include it in my next edition😎

Ankit Rathi is a Cloud Data Technologist, published author & well-known speaker. His interest lies primarily in building end-to-end data/AI applications/products following best practices of Data Engineering and Architecture.