Welcome to the second issue of the DIoC Newsletter. In this issue, we are going to learn more about the modern data stack, the execution model of Spark Streaming, reverse ETL, devising a robust data strategy, and using data lineage.

What Is Modern Data Stack: History, Components, Platforms, and the Future

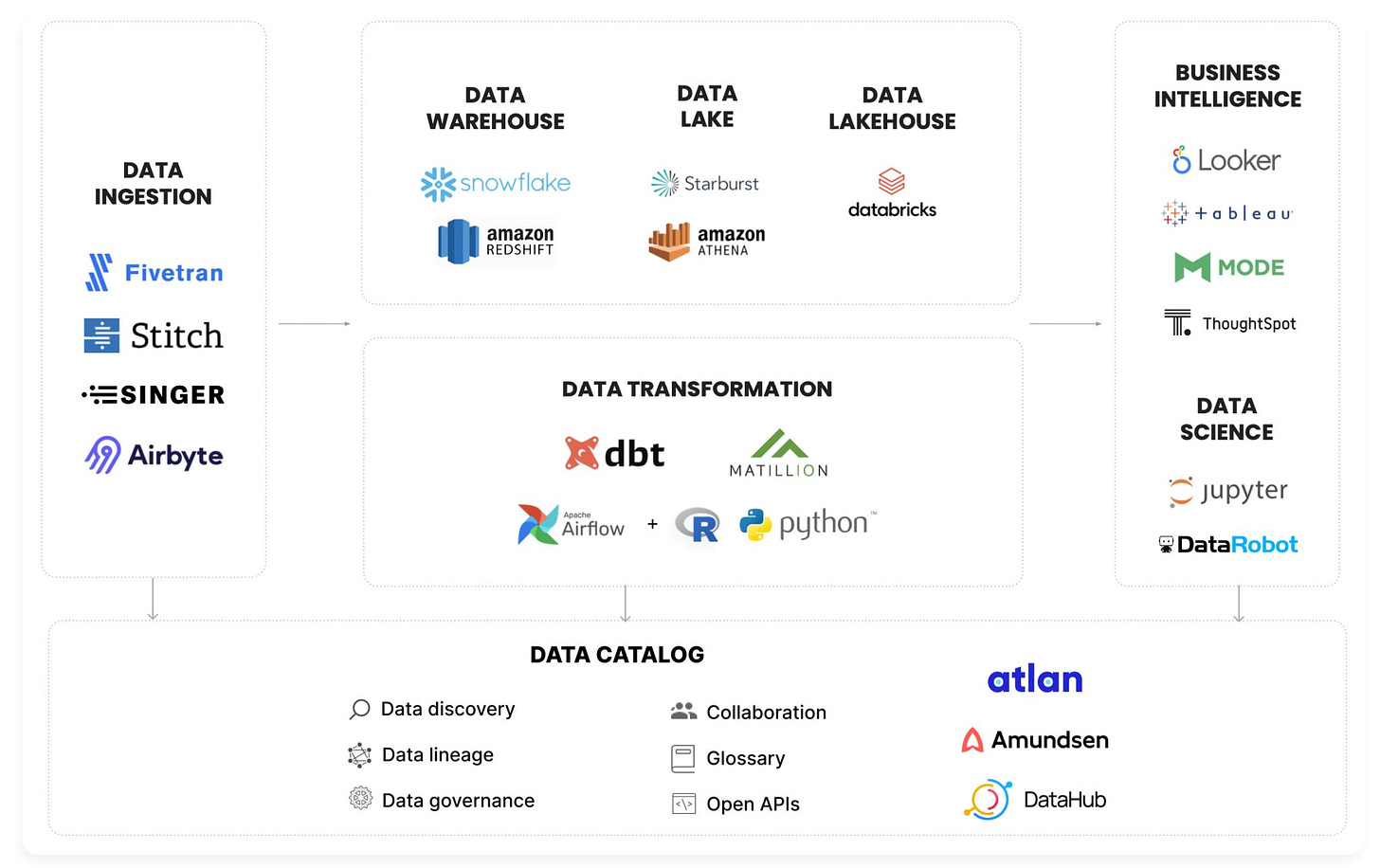

The modern data stack has seen so much innovation and attention recently that it has become challenging to sift through the avalanche of information surrounding it.

In this article, we will cover the definition of a modern data stack, the features and common capabilities of modern data stack tools, and actionable advice on how to approach tooling to meet the requirements of our data team.

The advent of cloud data warehouses (DWH) with massively parallel processing (MPP) capabilities and SQL as the primary interface has not only made processing large volumes of data faster and cheaper, but has also opened the door to many cloud-native data tools that are low-code, easy to integrate, scalable, and economical. Collectively, these tools are called the modern data stack.

What are the key characteristics of a modern data stack?

- Cloud-first

- Built around cloud data warehouse/lake

- Focus on solving one problem

- Offered as SaaS or open-core

- Low-entry barrier

- Actively supported by communities

What led to the conception of the modern data stack?

- The emergence of Hadoop and the public cloud

- The launch of Amazon Redshift

- A growing need for better tooling

What are the fundamental components of the modern data platform?

- Data Collection and Tracking

- Data Ingestion

- Data Transformation

- Data Storage (Data warehouse/lake)

- Metrics layer (Headless BI)

- BI Tools

- Reverse ETL

- Orchestration (Workflow engine)

- Data Management, Quality, and Governance

Modern data stack (MDS) tools have greatly improved the productivity of data practitioners. At the same time, emerging MDS tools are constantly pushing the boundaries of how data is stored, processed, analyzed, and managed. It will be interesting to see how the modern data stack evolves to solve the next level of complexity in data.

Source: https://atlan.com/modern-data-stack-101/

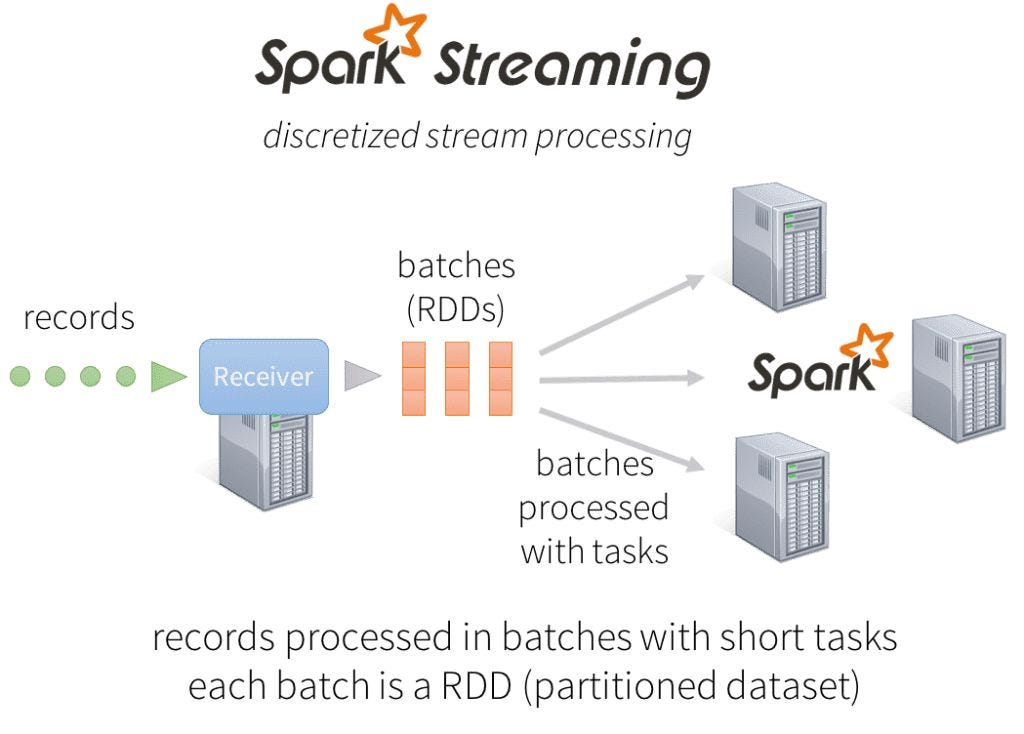

Diving into Apache Spark Streaming's Execution Model

At a high level, modern distributed stream processing pipelines execute as follows:

- Receive streaming data from data sources into a data ingestion system

- Process the data in parallel on a cluster

- Output the results to downstream systems

To process the data, most traditional stream processing systems are designed with a continuous operator model, which works as follows:

- There is a set of worker nodes, each of which runs one or more continuous operators

- Each continuous operator processes the streaming data one record at a time and forwards the records to other operators in the pipeline

- There are “source” operators for receiving data from ingestion systems, and “sink” operators that output to downstream systems

The above approach is simple but becomes challenging for larger-scale and more complex real-time analytics. Spark Streaming has been designed to meet the following requirements:

- Fast failure and straggler recovery

- Load balancing

- Unification of streaming, batch and interactive workloads

- Advanced analytics like machine learning and SQL queries

- Throughput comparable to or higher than other streaming systems

Instead of processing the streaming data one record at a time, Spark Streaming discretizes the streaming data into tiny, sub-second micro-batches.
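To make the micro-batch idea concrete, here is a minimal plain-Python sketch. It does not use Spark's API; the `discretize` and `word_counts` helpers, the `(arrival_time, payload)` record shape, and the 0.5-second interval are all assumptions made for illustration. It only shows the general pattern: records are grouped by fixed time intervals, and a batch-style computation then runs on each group as a whole.

```python
from collections import Counter
from typing import Iterable, Iterator, List, Tuple

# Hypothetical record shape: (arrival time in seconds, text payload).
Record = Tuple[float, str]


def discretize(records: Iterable[Record], batch_interval: float) -> Iterator[List[str]]:
    """Group time-ordered records into consecutive fixed-length micro-batches."""
    batch: List[str] = []
    batch_end = batch_interval
    for arrival_time, payload in records:
        # Close every interval that ended before this record arrived.
        # Intervals with no data produce an empty batch.
        while arrival_time >= batch_end:
            yield batch
            batch = []
            batch_end += batch_interval
        batch.append(payload)
    if batch:
        yield batch


def word_counts(batch: List[str]) -> Counter:
    """A batch-style computation applied to one micro-batch as a whole."""
    return Counter(word for line in batch for word in line.split())


# Illustrative input stream, already ordered by arrival time.
stream = [(0.1, "a b"), (0.4, "b"), (0.6, "c"), (1.7, "a a")]
batches = list(discretize(stream, batch_interval=0.5))
results = [word_counts(b) for b in batches]
```

Because each micro-batch is an ordinary finite collection, the same batch logic can be reused for streaming input, which is one way to read the "unification of streaming, batch and interactive workloads" requirement listed above.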

Source: https://www.databricks.com/blog/2015/07/30/diving-into-apache-spark-streamings-execution-model.html

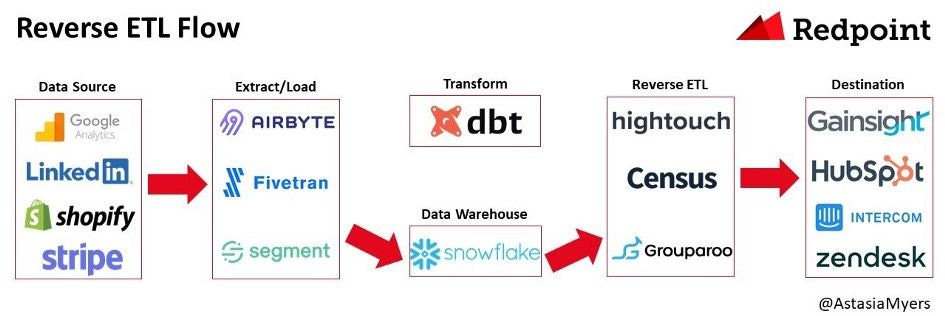

Reverse ETL — A Primer

The data ecosystem has gone through an incredible transformation from ETL to ELT, and now teams are adopting yet another approach: reverse ETL.

Reverse ETL is the process of moving data from a data warehouse into third-party systems to make data operational.

Sending data in real time to operational systems helps ensure a consistent view of the customer across all systems.

Mapping fields from the data warehouse to different operational systems is time-consuming and challenging, which is why reverse ETL solutions emerged.
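The field-mapping step can be sketched in a few lines of Python. Everything named here is hypothetical: the warehouse column names, the target field names in `FIELD_MAP`, and the `to_operational_record` helper are assumptions for illustration and do not reflect the API of any particular reverse ETL product or operational tool.

```python
from typing import Any, Dict, List

# Hypothetical mapping: warehouse column -> field name in a CRM-like tool.
FIELD_MAP: Dict[str, str] = {
    "customer_id": "ExternalId",
    "email_address": "Email",
    "lifetime_value_usd": "LifetimeValue",
}


def to_operational_record(row: Dict[str, Any], field_map: Dict[str, str]) -> Dict[str, Any]:
    """Rename mapped warehouse columns to target fields and drop unmapped ones."""
    missing = [col for col in field_map if col not in row]
    if missing:
        # Fail loudly rather than sync a partially populated record.
        raise KeyError(f"warehouse row is missing columns: {missing}")
    return {target: row[source] for source, target in field_map.items()}


# Illustrative rows, as they might come back from a warehouse query.
warehouse_rows: List[Dict[str, Any]] = [
    {
        "customer_id": 42,
        "email_address": "ada@example.com",
        "lifetime_value_usd": 1250.0,
        "internal_score": 0.9,  # not mapped, so it is not sent downstream
    },
]

payload = [to_operational_record(row, FIELD_MAP) for row in warehouse_rows]
```

In a real sync, `payload` would then be sent to the operational system's API. Maintaining one such mapping per destination, and keeping it correct as schemas change, is the part that tends to take time.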

Reverse ETL solutions are becoming a core piece of the data stack. There are now a handful of startups building reverse ETL products including Hightouch, Census, Grouparoo (open-source), Polytomic, Rudderstack, and Seekwell.

Source: https://medium.com/memory-leak/reverse-etl-a-primer-4e6694dcc7fb

Things not to miss when devising a robust data strategy

Devising a data strategy involves more than fitting technology best practices; it also requires a holistic overhaul of how the business adopts the transformation for good.

Where organizations fall short:

- Ignorance of data use cases

- Unawareness of data sources

- Lack of data literacy

- Undefined data ownership

- Lack of sponsorship

- Reactive culture

What organizations need:

- Tech enablement and cultural change

- Promoting data literacy

- Starting small with quick-wins

- Establishing a strong data foundation

- Empowering data governance

- Building architecture, frameworks, and methodologies

Taking the following small steps will help facilitate the change you are looking for:

- Start small but in line with the long-term strategic vision.

- Get buy-in for the overall strategic vision.

- Start with prioritized use cases and show the success.

- Get confidence from the stakeholders and then iterate with the next tasks.

Data Lineage and When You Should Use it

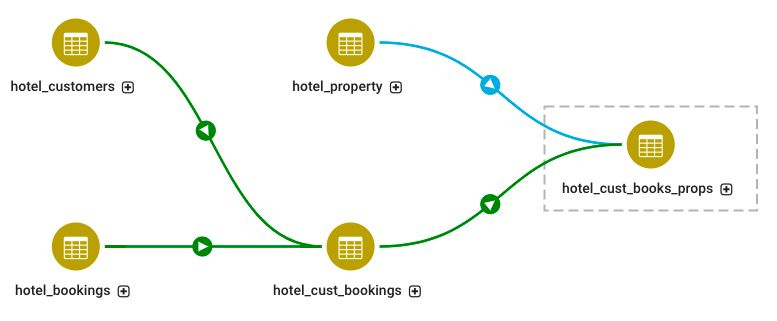

Data lineage includes methods and tools that track the lifecycle of data, showing how it flows between different IT systems and how it may be transformed in the process. It also enables users to understand the context of the data they use for decision-making and other business purposes.

Why should we apply or use data lineage?

- Traceability

- Compliance

- Maintainability

- Value

How can we achieve data lineage?

- Data tagging

- Pattern-based lineage

- Parsing-based lineage

Especially in larger companies, where a data lake stores not only huge amounts of data but also data from hundreds or thousands of different data sources (e.g., applications), it can be difficult to know which source system the data originally came from and whether it has been processed in the meantime.
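As a rough illustration of the parsing-based approach listed above, the sketch below reads SQL statements, records which tables each statement writes to and reads from, and then walks that graph to find every upstream source of a table. The regular expressions, the `extract_lineage` and `upstream` helpers, and the table names are assumptions for illustration; a regex handles only very simple SQL, and real lineage tools rely on full SQL parsers.

```python
import re
from typing import Dict, List, Set

# Deliberately simplistic patterns: they cover plain INSERT INTO / CREATE TABLE
# targets and FROM / JOIN sources, not subqueries, CTEs, or quoted names.
TARGET_RE = re.compile(r"\b(?:INSERT\s+INTO|CREATE\s+TABLE)\s+([\w.]+)", re.IGNORECASE)
SOURCE_RE = re.compile(r"\b(?:FROM|JOIN)\s+([\w.]+)", re.IGNORECASE)


def extract_lineage(statements: List[str]) -> Dict[str, Set[str]]:
    """Map each written table to the set of tables it directly reads from."""
    lineage: Dict[str, Set[str]] = {}
    for sql in statements:
        target = TARGET_RE.search(sql)
        if target is None:
            continue  # statement does not write a table we can identify
        lineage.setdefault(target.group(1), set()).update(SOURCE_RE.findall(sql))
    return lineage


def upstream(table: str, lineage: Dict[str, Set[str]]) -> Set[str]:
    """Return all direct and indirect sources of a table."""
    seen: Set[str] = set()
    stack = [table]
    while stack:
        for source in lineage.get(stack.pop(), set()):
            if source not in seen:
                seen.add(source)
                stack.append(source)
    return seen


# Hypothetical transformation scripts.
statements = [
    "INSERT INTO staging.orders SELECT * FROM raw.orders",
    "CREATE TABLE mart.revenue AS SELECT o.id, c.region "
    "FROM staging.orders o JOIN raw.customers c ON o.customer_id = c.id",
]

lineage = extract_lineage(statements)
sources_of_revenue = upstream("mart.revenue", lineage)
```

Here `sources_of_revenue` contains `staging.orders`, `raw.customers`, and `raw.orders`, which answers the traceability question of where a derived table originally came from.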

Source: https://medium.com/mlearning-ai/data-lineage-and-when-you-should-use-it-4df6a2cc1607

Subscribe to my newsletter and follow me on LinkedIn so you never miss an update.

What do you think about my weekly Newsletter?

If you have any suggestions or want me to feature your article, hit me up! I would love to include it in my next edition 😎

Ankit Rathi is a Cloud Data Technologist, published author & well-known speaker. His interest lies primarily in building end-to-end data/AI applications/products following best practices of Data Engineering and Architecture.